Chapman’s Oscar-winning entry for Expo 67 was commissioned by the province of Ontario. It uses a ‘multi-dynamic image’ technique (a phrase coined by Chapman). The technique combines ‘dynamic frames’, which are filmed sequences that vary in size as they are projected, with the simultaneous use of many of these ‘screens’ or ‘panes’ within the vast screen he was using at Expo 67, which measured 66 feet by 30 feet (roughly IMAX size). Remember that in 1967 computers weren’t ready for this kind of media-making. Chapman had to use auditor’s printed spreadsheets to work out how his multi-dynamic film should be shot and storyboarded. He also used them to describe the multi-image effect he wanted accurately enough for the Todd-AO optical-printing specialists in Hollywood to assemble all of his clips (180,000 feet of film), exactly as he intended, into an 18-minute multi-dynamic film.

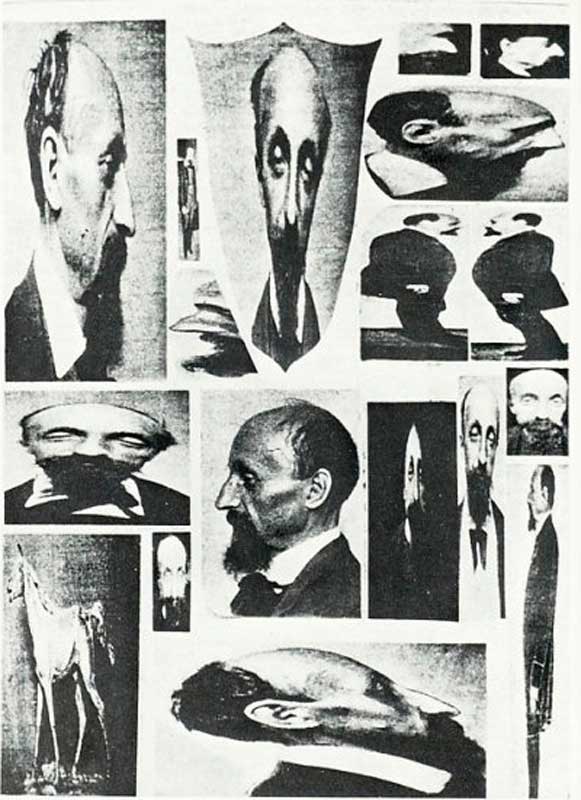

Chapman’s A Place to Stand was his first widely promoted attempt to realise his multi-dynamic image approach. This is a fragment of the 70mm film, showing sample images optically (photographically) printed as dynamic frames within the span of the 70mm frame.

A Place to Stand is a multi-image treatment, a motion-montage, of the province of Ontario. Chapman’s film content follows the ‘city symphony’ ideas of the 1920s (Ruttmann’s Berlin: Symphony of a Great City, Vertov’s Man with a Movie Camera, etc.). In the avant-garde, experimental spirit of those early attempts to capture a physical space, Chapman’s film invents new techniques, a new form in fact. These techniques presage the digital compositing software that has come into wide use over the last two decades.

BTW, users of 21st-century compositing software like Adobe After Effects, Nuke, Maya Composite, Apple Motion, etc. will find it hard to appreciate the difficulties Chapman faced in his quest for the multi-dynamic image form. Today it is easy to assemble and view test composites in realtime, or after only a few minutes of rendering. You can see the results on large flat-screen monitors as you work. Try to imagine this kind of compositing being planned on a standard Moviola. This is how Chapman describes part of the process:

> “180,000 feet of film were shot. Some additional footage of material I had not time to shoot myself was shot by David Mackay, using TDF cameramen. After completely familiarising myself with the footage, I worked out a storyboard of the entire film. Although it was theoretical, it did give me an impression of how the subject matter could be structured. I then had to devise my own charts as did Barry Gordon who translated my charts into his own lab charts in a language that the lab could comprehend. The lab was most impressed with the clarity of Barry Gordon’s technical instructions.
>
> To edit the film I had a 2 picture head moviola which was the closest one could get to visualising the results. One could only use it to compare actions of any 2 shots at one time and designate the length of shots. In normal film editing, one works with the actual footage and soon discovers that [a] frame or two on any shot can make a difference in rhythm. With the Ontario film I could never “see” the film develop. The charts indicated the movement of the shots. Because of the shortage in time there could be no changes in structure in any of the sequences once they returned from the lab. It was a tremendous discipline for me, for once I had made a creative decision, I could not change my mind. The entire concept of development therefore, was on paper in chart form.”

(from http://www.in70mm.com/news/2011/canadian_short/place/index.htm)

So, like graphic designers of the time, Chapman had to provide the optical printing lab at Todd-AO with a set of written instructions and multi-image storyboards. He then had to wait several days or weeks while the lab constructed his multi-dynamic film.
Nowadays we can visualise this more or less in realtime. What lucky bastards we are!
