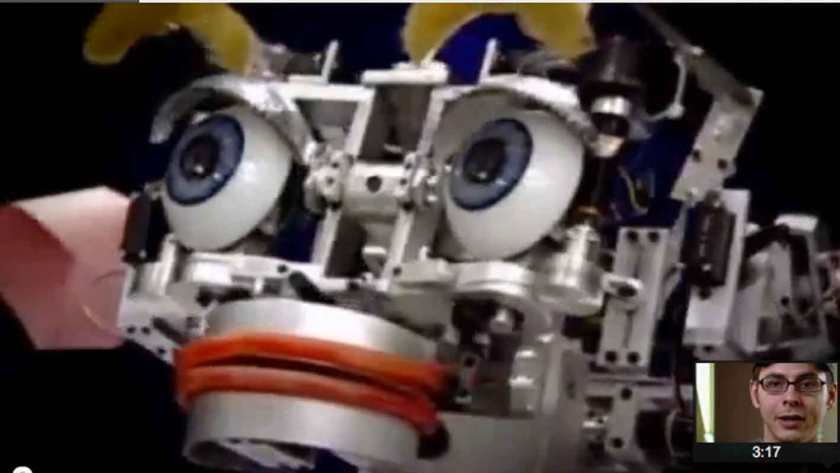

Cynthia Breazeal is associate professor of Media Arts and Sciences at MIT. She is a pioneer of social robotics – the investigation of how to make a robot respond to social interaction with humans using some of the signature facial expressions that mirror the ‘human qualities’ of social exchange, understanding, and empathy. Kismet was an early illustration of Breazeal’s doctoral thesis – on Affective Robotics – at the MIT AI Lab from 1999.

“Kismet is an expressive robotic creature with perceptual and motor modalities tailored to natural human communication channels. To facilitate a natural infant-caretaker interaction, the robot is equipped with visual, auditory, and proprioceptive sensory inputs. The motor outputs include vocalizations, facial expressions, and motor capabilities to adjust the gaze direction of the eyes and the orientation of the head. Note that these motor systems serve to steer the visual and auditory sensors to the source of the stimulus and can also be used to display communicative cues.”

(http://www.ai.mit.edu/projects/sociable/baby-bits.html)

Considerable effort in the research community has gone into developing human-like robots, the smart (AI-based) software that drives them, and the prosthetic ‘front-end’ – the eyes, face, mouth, voice etc – that is the effective, and hopefully affective, user-interface of the robot: what you see, how you gauge understanding, how you apply the Turing test, and so on. David Hanson (of Hanson Robotics) has produced some remarkable prototypes illustrating progress in this area since Kismet.

What exactly is the relevance of Kismet to the world of film?

We are heading rapidly towards a scenario in which sophisticated CGI and AI software – a development of what is happening right now in Pre-Viz and Games software – enables us to create feature-length movie-experiences in which several or even most of the protagonists are not just soft-machines (like us humans) but really software-machines: CGI-developed, ultra-realistic humanoid soft-robots or cyborgs, each equipped with a sophisticated software-brain, a built-in expert-system style memory retrieval mechanism, chatbot-style conversational capabilities, and other AI allowing it to keep in character and play its part in relevant plot development. Importantly, these synthespians, virtual-actors (vactors) or digital dramaturges will be equipped with the kind of algorithms developed by Cynthia Breazeal and David Hanson – they will be able to assume the human characteristics, facial expressions and affective responses relevant to their fictional characters. Eventually, of course, real actors will sell the software rights to their individual portfolios of personal motion-capture data, expression-capture data and likeness data, allowing movie-makers to cast (say) Richard Burton (in his prime) with Gloria Swanson (in her prime), Peter Lorre (at his best) and Brad Pitt (at 20 years old) together in the same movie – the ultimate casting machine. Bit-parts and crowd scenes will be supplied by future generations of Massive Prime’s crowd-simulator, and the whole will be locked together into movies using the catalog of virtual cinematography effects that we have already begun to develop.